Projects

A selection of my projects

Journey of Water @ Walt Disney World

Interactive water attraction inspired by Moana

Conducted computer vision, deep learning, and controls R&D for this attraction. So happy to see it bringing joy to so many people!

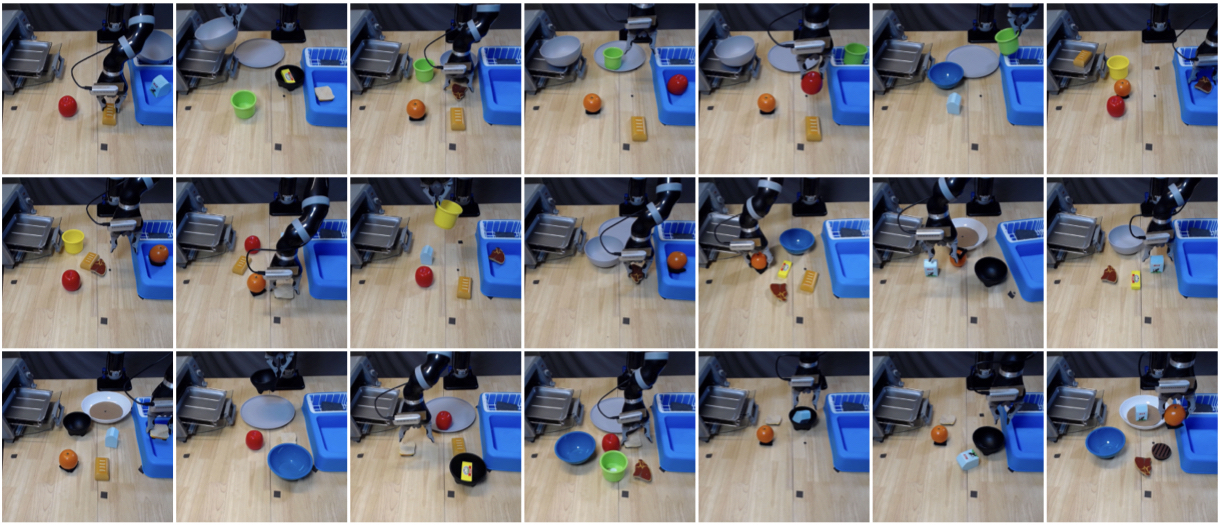

CLVR Jaco Play Dataset

Dataset of diverse robot trajectories from CLVR @ USC

Diverse multimodal dataset of 1085 teleoperated robot episodes. Includes multi-view camera observations, Cartesian position and velocity data, and natural language annotations. Tasks include picking and placing a variety of items (spanning several categories) and receptacles.

Improving Language Understanding via Privileged Multimodal Training

Final Project for CSCI 535 (Multimodal and Probabilistic Learning for Human Communications)

Novel method for improving downstream unimodal language understanding tasks by leveraging multimodal data during training: the model is optimized towards the task-specific objective while being regularized against a multimodal teacher network in latent space.

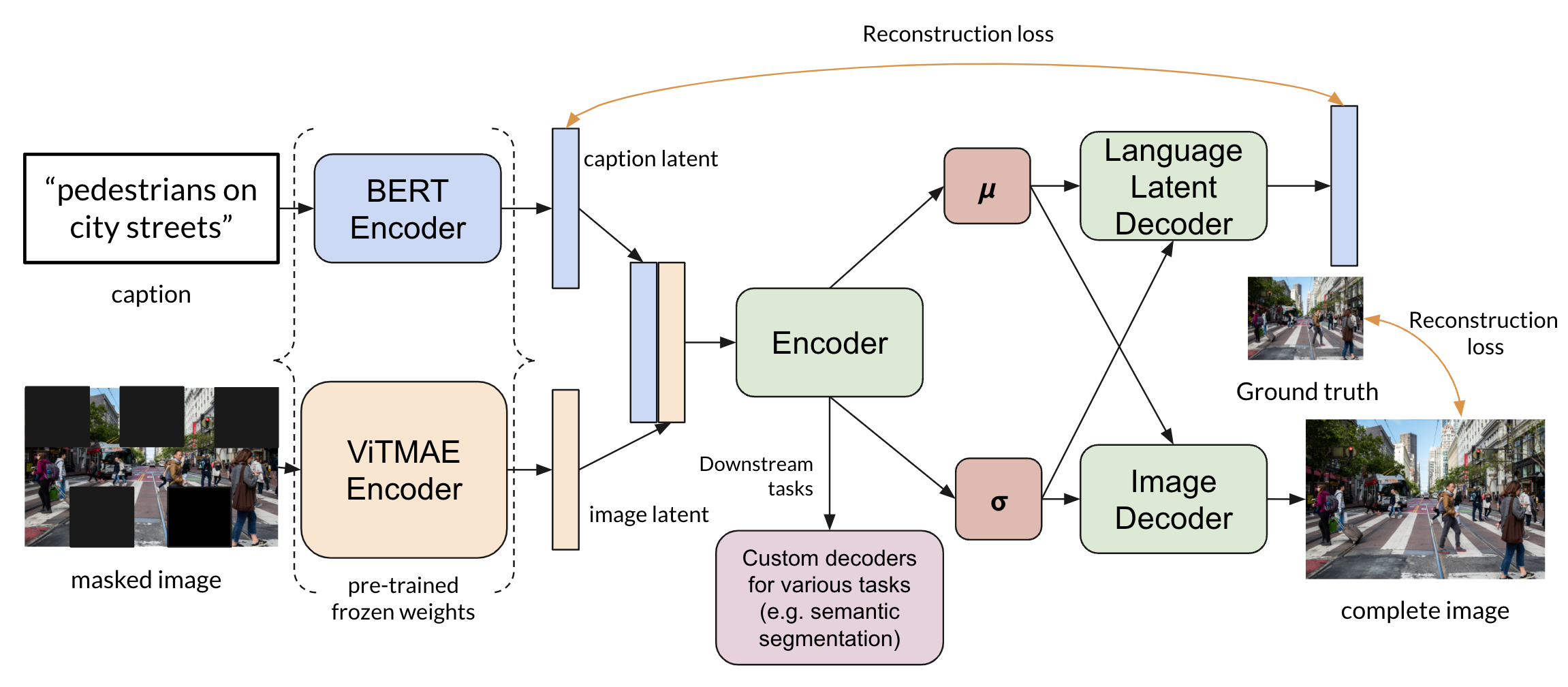
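
The training objective described above can be sketched as a task loss plus a latent-space penalty that pulls the unimodal student towards the multimodal teacher. This is only a minimal illustration, not the project's actual code: the squared-L2 distance, the `reg_weight` value, and the function names are assumptions made for the example.

```python
import numpy as np


def softmax_cross_entropy(logits, labels):
    """Mean cross-entropy over a batch; logits is (N, C), labels is (N,) ints."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


def privileged_loss(logits, labels, student_latent, teacher_latent, reg_weight=0.1):
    """Task loss plus a latent-space regularizer against the teacher.

    student_latent: (N, D) latents from the text-only student.
    teacher_latent: (N, D) latents from the multimodal teacher (held fixed).
    reg_weight:     hypothetical trade-off weight, chosen here for illustration.
    """
    task = softmax_cross_entropy(logits, labels)
    # Assumed distance: mean squared L2 distance between student and teacher latents.
    latent_reg = np.mean(np.sum((student_latent - teacher_latent) ** 2, axis=1))
    return task + reg_weight * latent_reg
```

At inference time only the student is needed, so the multimodal inputs are "privileged" in the sense that they are available during training only.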

LCMVAE: Language-conditioned Masked Variational Autoencoder

Final Project for CSCI 566 (Deep Learning Applications)

A simple solution for multimodal representation learning, which takes advantage of modality-specific pre-trained encoders and a flexible, lightweight, latent-mixing network to effectively generate meaningful multimodal representations.

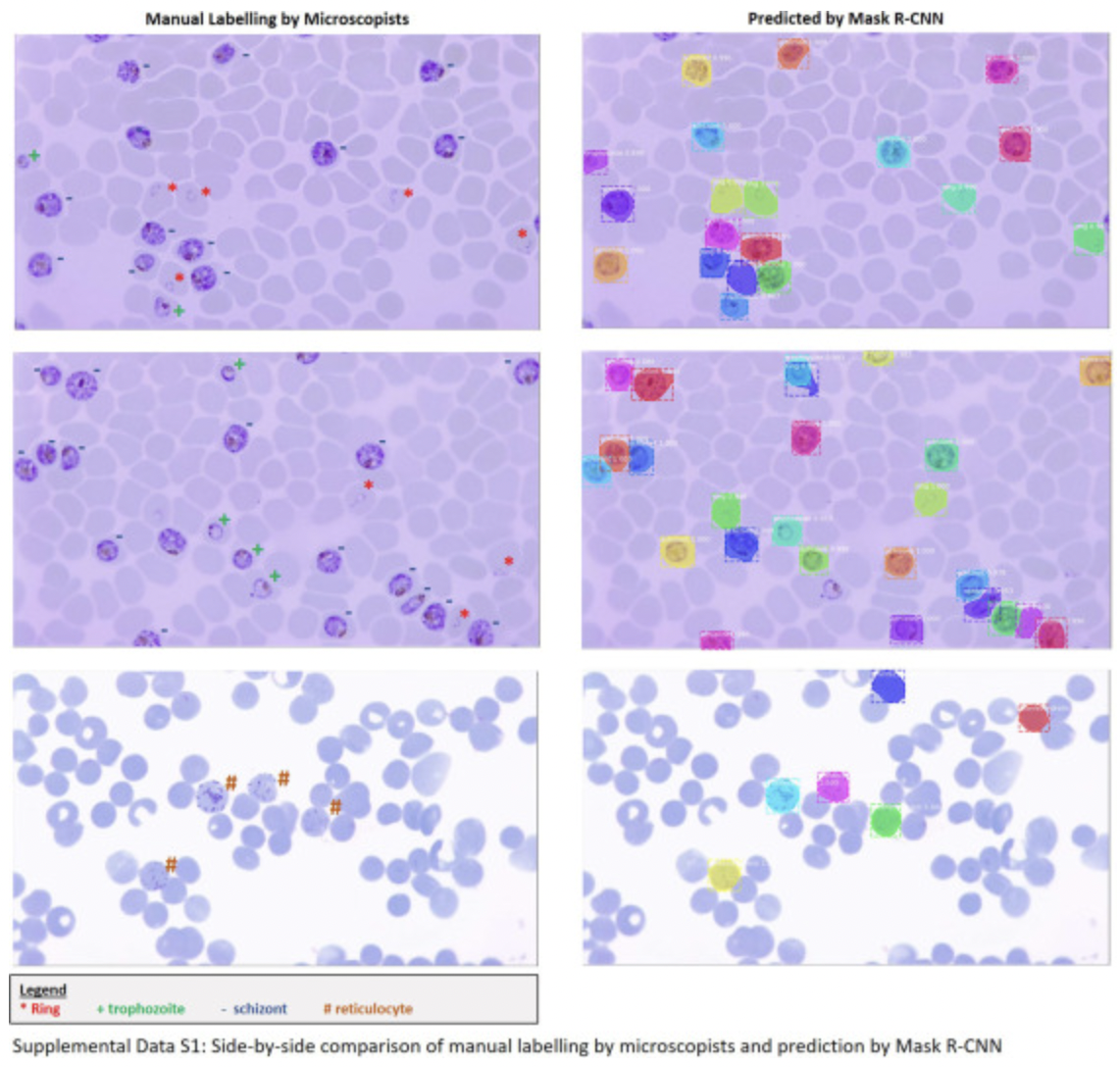

A deep learning approach to the screening of malaria infection: Automated and rapid cell counting, object detection and instance segmentation using Mask R-CNN

Published in Computerized Medical Imaging and Graphics

Application of a deep-learning model, Mask R-CNN, trained to identify healthy and Plasmodium-infected red blood cells. Capable of generating segmentation masks on top of bounding box classifications for immediate visualization and stage-specific identification. Potential to reduce errors common in manual counting through subsequent standardization.

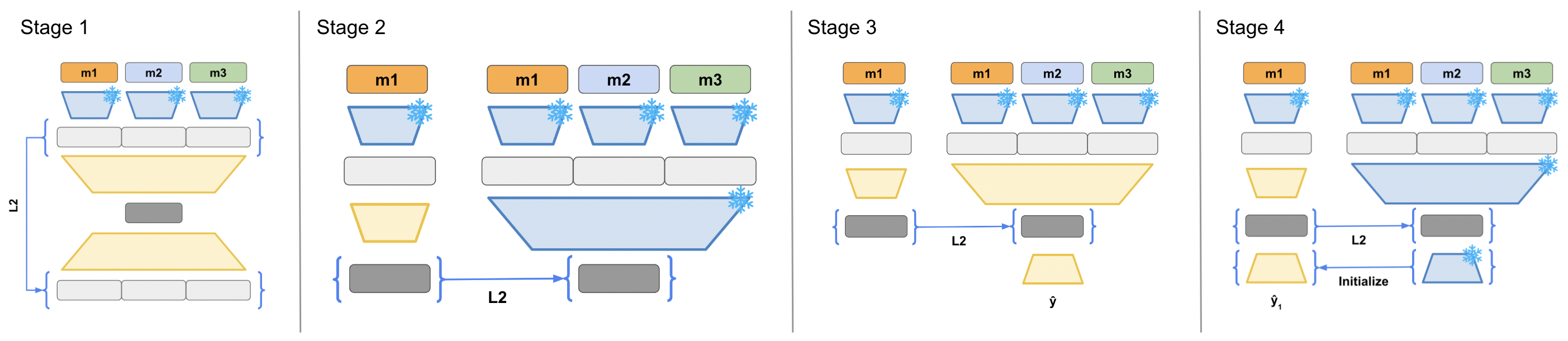

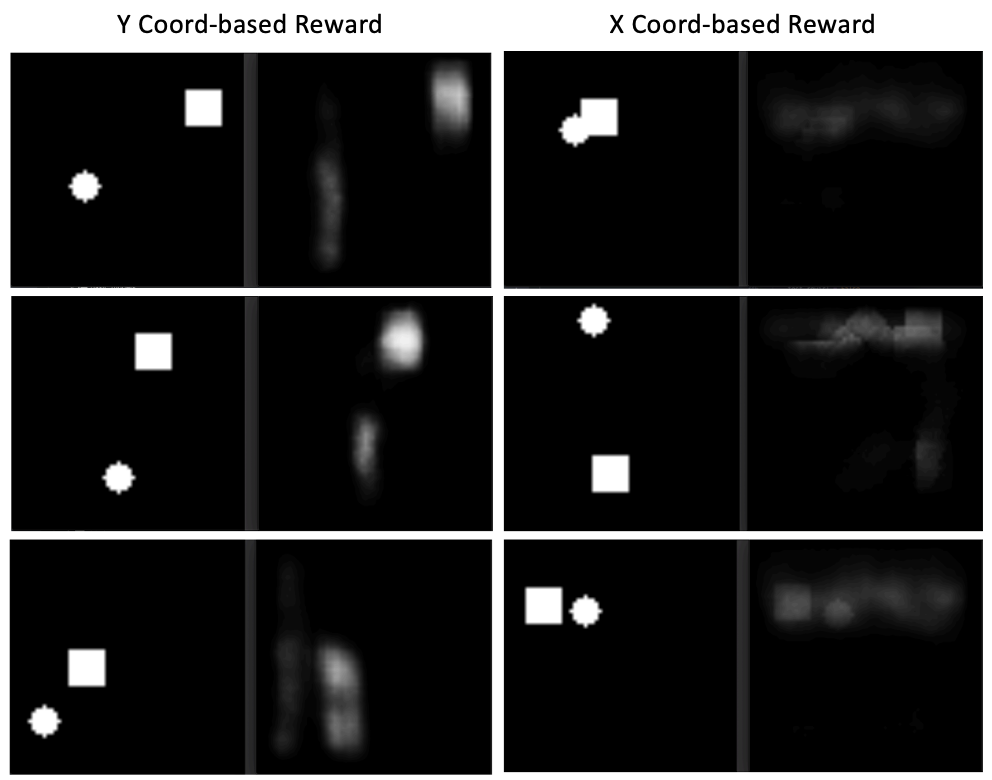

Reward-Induced Representation Learning

CLVR implementation challenge

Pre-trained predictive models on various tasks to facilitate RL training on a novel downstream task from the same distribution. The resulting reward-induced representation proved useful both in speeding up downstream PPO training and in improving final rollout performance.

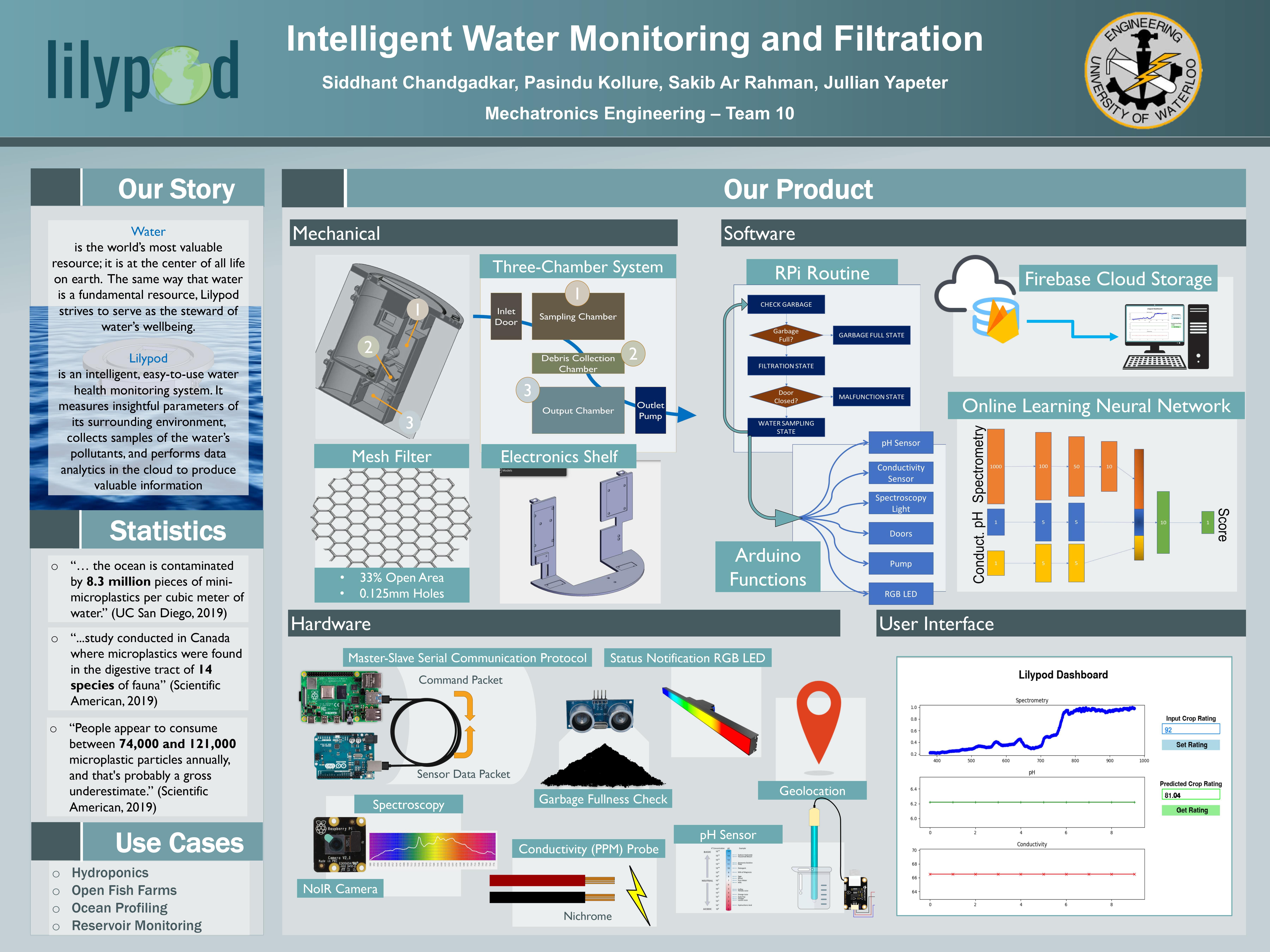

Lilypod

Undergraduate Mechatronics Capstone Project

AI-powered water monitoring system. Measures parameters of its surrounding environment, collects samples of the pollutants, and performs data analytics in the cloud to produce valuable information.

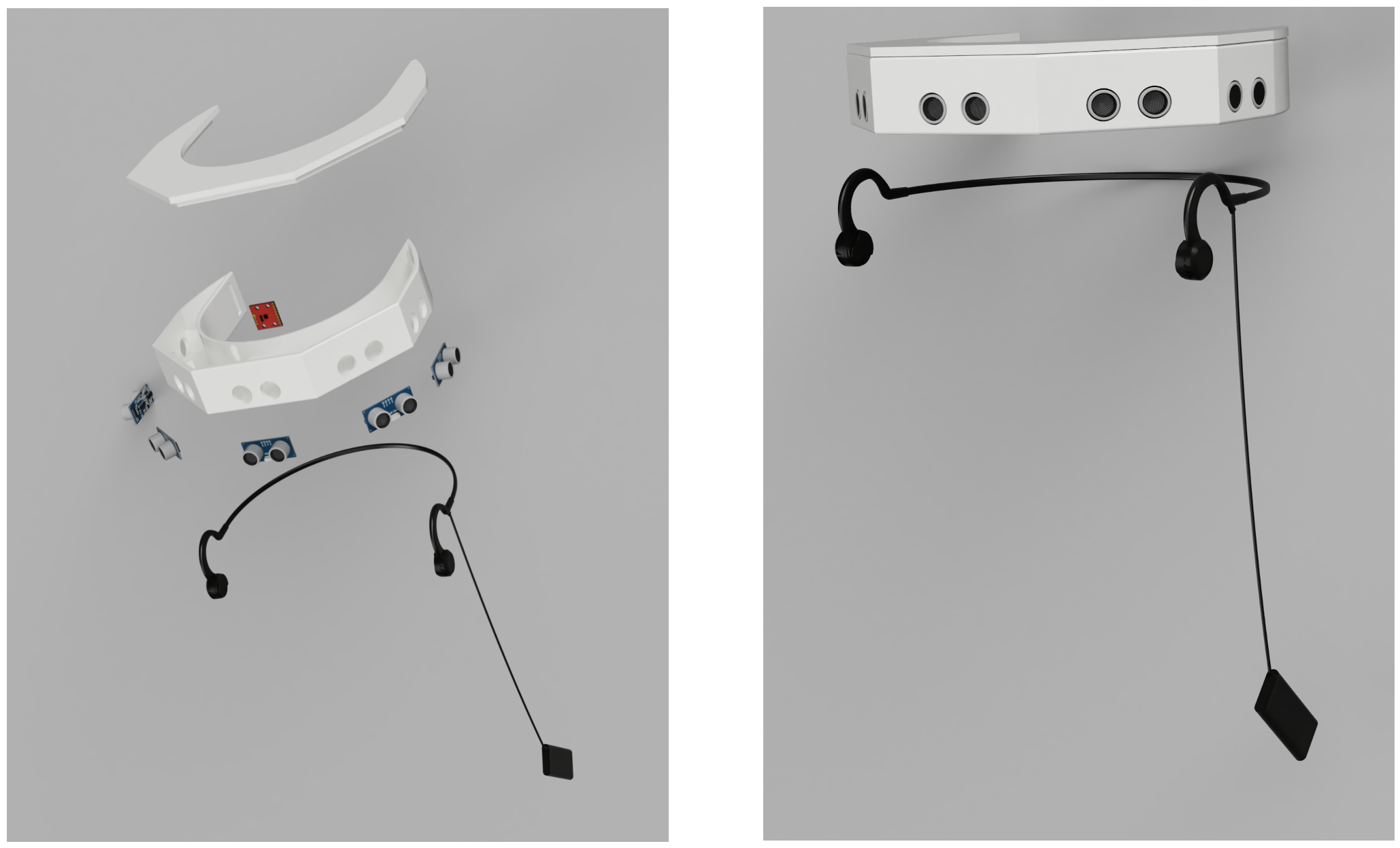

NaviGAIT

Vision for the blind, through positional sound

Final project for the 30.007 Engineering Design Innovation course at SUTD. An ultrasonic-based wearable for the blind that uses 3D positional audio feedback to assist in obstacle avoidance, with IMU-based fall detection and notifications sent over the Twilio API. Won the Best Demonstration Award at the public project exhibition, awarded by industry guests.

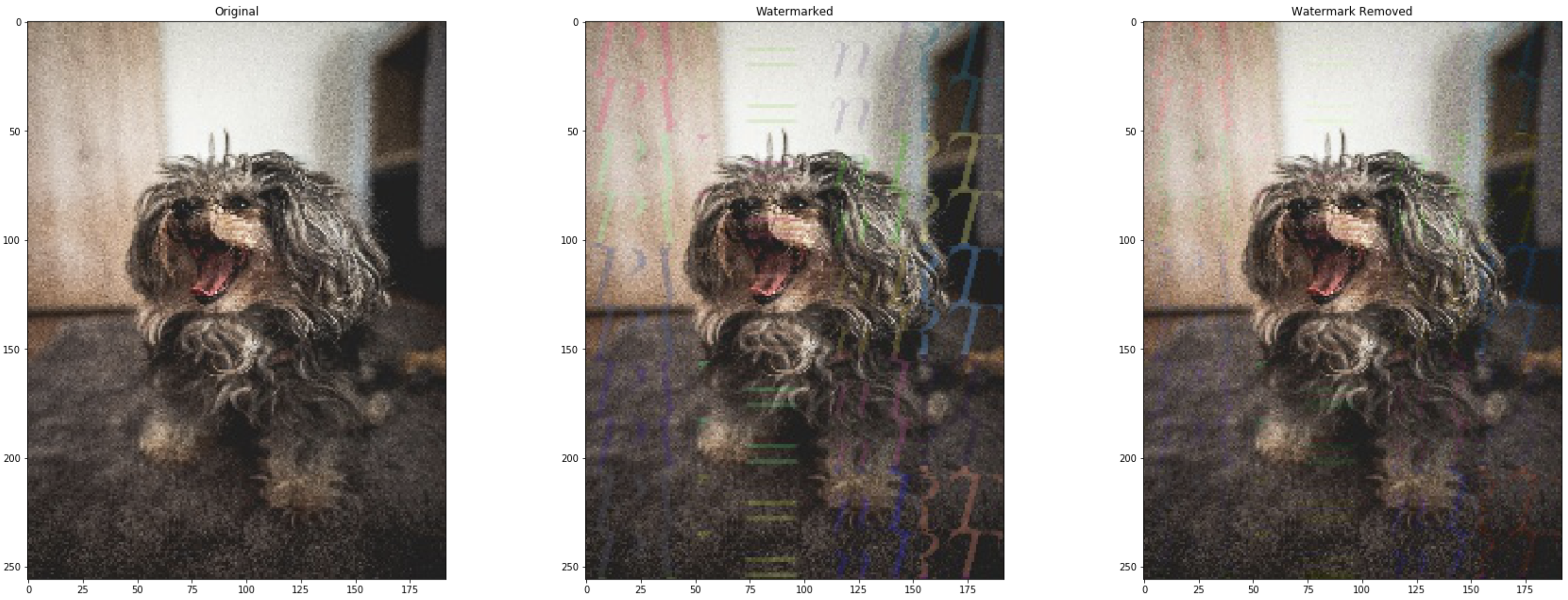

Automatic Watermark Removal

Final Project for CS 484 (Computational Vision)

Given a set of images with the same watermark, our method segments watermark boundaries (employing graph cut) and subsequently estimates the watermark's parameters (color + alpha-blending constant). It then removes the watermark using an inverse watermarking transform.
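
The final removal step can be illustrated with the standard alpha-blending model, where a watermarked pixel is `alpha * watermark + (1 - alpha) * original`, so the original is recovered by inverting that expression. The sketch below assumes float images in [0, 1], a single watermark color, and an optional boolean mask from the segmentation step; the function names are hypothetical and the graph-cut and parameter-estimation stages are not shown.

```python
import numpy as np


def apply_watermark(image, watermark_color, alpha):
    """Forward model: blend a constant-color watermark over an (H, W, 3) image."""
    return alpha * watermark_color + (1.0 - alpha) * image


def remove_watermark(watermarked, watermark_color, alpha, mask=None):
    """Invert the alpha blend given estimated watermark parameters.

    watermarked:     (H, W, 3) float array in [0, 1].
    watermark_color: (3,) estimated watermark color.
    alpha:           estimated blending constant, must be in [0, 1).
    mask:            optional (H, W) boolean array marking watermark pixels.
    """
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must be in [0, 1) for the blend to be invertible")
    restored = (watermarked - alpha * watermark_color) / (1.0 - alpha)
    restored = np.clip(restored, 0.0, 1.0)
    if mask is not None:
        # Only touch pixels inside the segmented watermark region.
        restored = np.where(mask[..., None], restored, watermarked)
    return restored
```

With exact parameters the round trip `remove_watermark(apply_watermark(img, c, a), c, a)` returns the original image up to floating-point error; in practice the quality depends on how well the color and alpha are estimated.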

Portrait AutoColorizer

Final Project for SYDE 522 (Machine Intelligence)

This one started because I wanted to colorize my grandparents' old portraits. Our AutoColorizer fine-tunes a VGG16 network, pretrained on ImageNet, on a dataset of 200,000 celebrity portraits. We formulate the colorization process as the prediction of the AB channels given the L channel of an image in the LAB color space.
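
The LAB formulation can be sketched as follows: the L channel is the network input, the AB channels are the prediction target, and the colorized result is the original L stacked with the predicted AB. This is an illustration under assumptions, not the project's code: `predict_ab` is a hypothetical stand-in for the fine-tuned network, and the mean-squared-error loss is assumed since the loss used is not stated.

```python
import numpy as np


def split_lab(lab_image):
    """Split an (H, W, 3) LAB image into input L (H, W, 1) and target AB (H, W, 2)."""
    l_channel = lab_image[..., :1]
    ab_channels = lab_image[..., 1:]
    return l_channel, ab_channels


def colorize(l_channel, predict_ab):
    """Recombine the known L channel with predicted AB channels.

    predict_ab is a hypothetical callable mapping (H, W, 1) -> (H, W, 2).
    """
    ab_pred = predict_ab(l_channel)
    return np.concatenate([l_channel, ab_pred], axis=-1)


def ab_regression_loss(ab_pred, ab_true):
    """Assumed training loss: mean squared error over the AB channels."""
    return float(np.mean((ab_pred - ab_true) ** 2))
```

Because L is passed through unchanged, the output keeps the brightness and detail of the grayscale portrait; only the color information is inferred.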